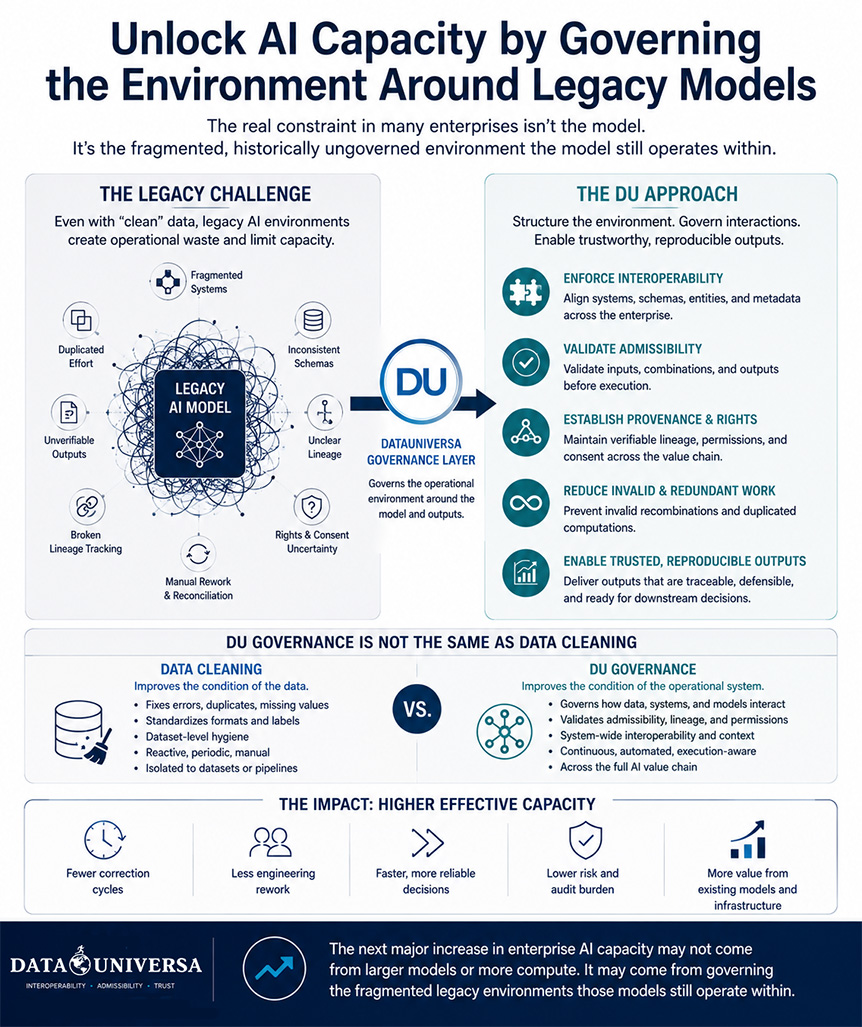

Most enterprise AI systems were not built on governed, interoperable data environments. They were trained years ago across fragmented datasets, inconsistent schemas, unclear lineage, and operational workflows that were never designed for long-term scalability.

That is becoming one of the largest hidden constraints on effective AI capacity.

A significant amount of enterprise compute and engineering effort is now spent compensating for legacy data environments surrounding these models: repeated reconciliation work, duplicated transformation pipelines, unverifiable outputs, broken lineage tracking, and manual correction workflows. In many cases, the issue is no longer the model itself. It is the operational environment the model still depends on.

This is also why the problem cannot be solved through “data cleaning” alone.

Cleaning data improves the condition of individual datasets. But many legacy enterprise AI environments are already operating on technically clean datasets while still suffering from operational fragmentation between systems. The largest capacity losses often occur after the data has already been cleaned — through incompatible schemas, repeated transformations, invalid recombinations, manual downstream validation, and unresolved lineage or permissions issues across environments.

This is where DataUniversa approaches governance differently.

While modern governance frameworks often focus on governance at ingestion for newly collected data, DU can also govern the operational layer surrounding legacy AI systems already in production. By enforcing interoperable inputs, validating admissibility before execution, aligning metadata structures, validating provenance and permissions, and reducing invalid or redundant computations, DU helps stabilize environments that were historically ungoverned.

The result is not simply better compliance or cleaner datasets. It is increased usable capacity from infrastructure enterprises already have deployed. Less operational waste, fewer correction cycles, improved reproducibility, and more trustworthy outputs all contribute to a more efficient AI environment without requiring immediate full-model replacement.

The next major increase in enterprise AI capacity may not come solely from larger models or more compute. It may come from governing the fragmented legacy environments those models still operate within.