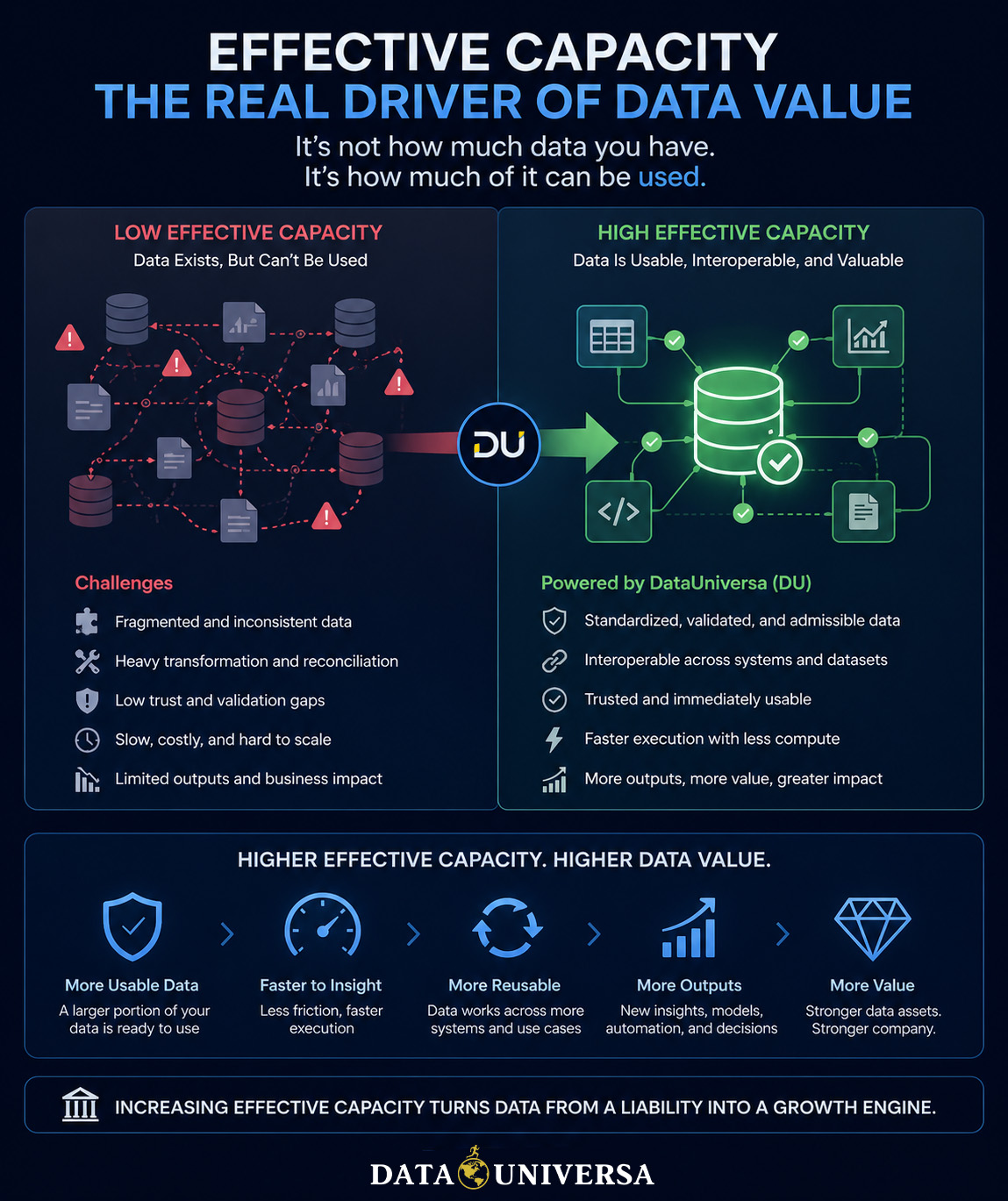

Most organizations still measure data value the wrong way.

They focus on how much data they have—how much is stored, collected, or processed. But volume has never been a reliable indicator of value. Large datasets often sit idle, fragmented across systems, difficult to combine, and expensive to use. What actually determines value is not how much data exists. It’s how much of it can be used effectively.

This is effective capacity.

What Effective Capacity Really Means

Effective capacity is the portion of your data environment that is immediately usable: data that can be trusted, combined, and executed without significant rework. In practice, this portion is far smaller than most companies assume. Data may exist, but if it requires heavy transformation, reconciliation, or validation before it can be used, it is not contributing to output. It is stored potential, not active value.

That gap between what exists and what can actually be used is where most organizations lose leverage.
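To make the definition concrete, here is a minimal sketch of effective capacity as a ratio of usable data to stored data. It is an illustration under stated assumptions, not an official formula: the `Dataset` fields, the three readiness flags, and the choice to weight by size in gigabytes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    """Hypothetical inventory entry; the field names are assumptions."""
    name: str
    size_gb: float
    trusted: bool      # passes validation without manual checks
    combinable: bool   # joins with other datasets without schema rework
    executable: bool   # can feed a query or job as-is

    @property
    def usable(self) -> bool:
        # Counts toward effective capacity only if all three conditions hold.
        return self.trusted and self.combinable and self.executable


def effective_capacity(datasets: list[Dataset]) -> float:
    """Share of stored volume that is immediately usable, from 0.0 to 1.0."""
    total = sum(d.size_gb for d in datasets)
    if total == 0:
        return 0.0
    usable = sum(d.size_gb for d in datasets if d.usable)
    return usable / total


# Illustrative numbers only, not measurements.
inventory = [
    Dataset("orders", 400.0, True, True, True),
    Dataset("clickstream", 1200.0, True, False, True),
    Dataset("crm_export", 400.0, False, False, False),
]
ratio = effective_capacity(inventory)
print(f"{ratio:.0%} of stored data is immediately usable")
```

In this made-up inventory, 2,000 GB is stored but only the 400 GB `orders` dataset meets all three conditions, so the environment looks five times larger than it behaves.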

The Hidden Cost of Fragmentation

When effective capacity is low, companies compensate with engineering effort and infrastructure. Teams spend time fixing inconsistencies, aligning schemas, and rebuilding pipelines just to make data usable. Compute is consumed on repeated transformations and inefficient workflows. Systems grow more complex, not because the problems are advanced, but because the data cannot operate together cleanly. The result is a data environment that looks large, but behaves small.

Increasing Effective Capacity Through DataUniversa

DataUniversa approaches this problem differently. Instead of optimizing workflows around fragmented data, it enforces structure at the point where data becomes usable. By standardizing how data is validated, structured, and allowed to interact, it removes the need for repeated transformation and reduces the risk of invalid execution. Data becomes interoperable by design, and admissibility ensures that only valid data paths are used. This doesn’t just improve efficiency—it changes how much of the data environment is actually available for use.
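The general idea of enforcing structure at the point of use can be sketched as a simple admissibility gate. This is not DataUniversa's API, which the article does not describe; `REQUIRED_SCHEMA`, `is_admissible`, and `admit` are hypothetical names, and the field-and-type check stands in for whatever validation rules a real system would apply.

```python
# Hypothetical shared schema that every record must satisfy before use.
REQUIRED_SCHEMA = {"customer_id": int, "amount": float}


def is_admissible(record: dict) -> bool:
    """True when the record has exactly the required fields with the required types."""
    return record.keys() == REQUIRED_SCHEMA.keys() and all(
        isinstance(record[field], expected)
        for field, expected in REQUIRED_SCHEMA.items()
    )


def admit(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into (admitted, rejected) so only valid data reaches downstream steps."""
    admitted, rejected = [], []
    for record in records:
        (admitted if is_admissible(record) else rejected).append(record)
    return admitted, rejected


incoming = [
    {"customer_id": 1, "amount": 19.99},
    {"customer_id": "2", "amount": 5.0},  # wrong type for customer_id
    {"customer_id": 3},                   # missing amount
]
admitted, rejected = admit(incoming)
print(len(admitted), "admitted,", len(rejected), "rejected")
```

Because every consumer can rely on the same check having already run, downstream jobs do not need their own cleanup step, which is the repeated transformation the paragraph above describes.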

From Efficiency to Expansion

Most data strategies aim to do the same work faster.

Increasing effective capacity does something more important—it increases how much work can be done at all. As more data becomes immediately usable, it can participate in more operations: cross-dataset analysis, model training, decision systems, and monetization. The dataset itself hasn’t grown, but its ability to produce outcomes has. That is a direct expansion of capability.

Why This Increases Data Value

Data value is driven by output, not existence.

A dataset that can be reliably combined, executed, and reused across multiple contexts has far greater value than one that remains isolated or difficult to access. As effective capacity increases, so do output reliability, speed, and flexibility. This is where valuation changes. Data stops being a passive asset and becomes an active contributor to the business.

The Company-Level Impact

At the organizational level, the shift is significant. Engineering time moves away from maintenance and toward creation. Compute is used more productively. Infrastructure scales more efficiently. Most importantly, data begins to function as a system rather than a collection of disconnected parts. Companies that achieve this don’t just manage data better—they extract more value from the same underlying assets.

The future of data value is not about collecting more.

It’s about activating more of what you already have.

Effective capacity is the metric that defines that shift. By increasing it, DataUniversa doesn’t just improve performance; it increases the actual value of the data and, by extension, of the company itself.